Data wrangling is the process of gathering, selecting, and transforming data to answer an analytical question. Also known as data cleaning or “munging”, legend has it that this wrangling costs analytics professionals as much as 80% of their time, leaving only 20% for exploration and modelling. Why does it take so long to wrangle the data so that it is usable for analytics?

Howard Diesel, Data Management Professional, Modelware Systems

Howard spoke at the Virtual IRM UK Enterprise Data & Business Intelligence and Analytics Conference Europe co-located with the Data Governance & Master Data Management Summit Europe 3-4 November 2020 on the subject, ‘Timeless Principles Should Remain but Change Everything Else: Fundamentals of Data Warehousing & Business Intelligence‘

“What is Data Wrangling and Why does it take so long?’ Mike Thurber

Executive Summary

The last 5 to 10 years we have seen vendors and data engineers attempting to disrupt the timeless techniques of data integration (ETL, ELT, Data Warehousing & Data Marts) by promising Self-Service analytics and data wrangling.

It takes so long because essential data management principles and techniques must be applied to the raw data to ensure that the data is reliable and supports the analytical techniques required for business decision-making. Raw data that we are now collecting from external sources, to complement our internal data, is messy. Messy data requires more attention and stricter application of the essential principles.

I have used the principle of Data Modelling in the title to ensure that we get Data Reliability By Design and not through the painful process of data maturity.

Instead of disrupting, let us apply and improve the timeless techniques of preparing data appropriately for business decision-support.

Introduction

“Data veracity is the degree to which data is accurate, precise and trusted. We expect data to be certain and reliable. The reality of problem spaces, data sets and operational environments is that data is often uncertain, imprecise and difficult to trust. Related concepts are biases, data lineage, noise, abnormalities, protection, falsification, uncertainty.”

The essential data management principles and capabilities that I am referring to are all the areas of data management as described by the Data Management Body Of Knowledge (DMBOK) published by DAMA International. It is not just Data Warehousing & Integration, and we must apply the principles of Data Modelling, Data Quality and Metadata.

Big Data and Advanced Analytics have undoubtedly had to deal with more significant data veracity challenges than traditional data warehousing. Fortunately, open-source data wrangling packages, R & Python, have provided easy-to-use functions to transform, just about any data format that we receive. Unfortunately, we are still required to apply essential data management techniques to ensure that we have reliable data.

It is also important to note that business decision quality is NOT solely dependent on data. There is a lot more to achieving decision quality than achieving 100% data quality, which is impossible.

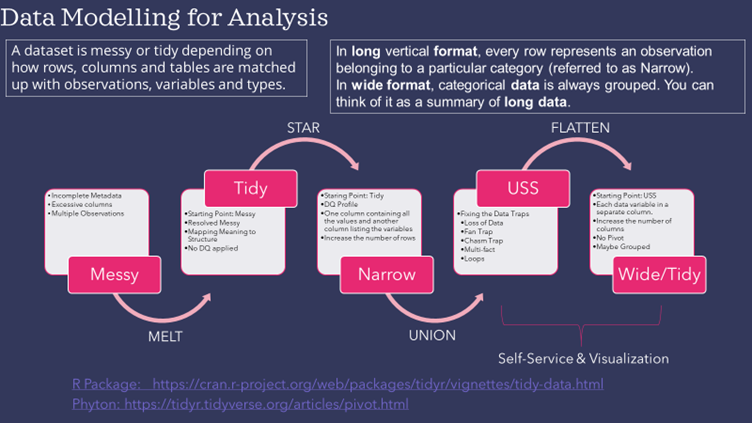

In researching the topic of Data Wrangling, I have found one dominant technique (approach), which I refer to as the “Messy to Tidy” Technique. There have been several papers and open-source packages dedicated to supporting this technique.

The above figure demonstrates how we can extend this approach by adding timeless techniques back in. We have to ensure that the recipe applies the following essential capabilities: Data Governance, Metadata, Data Modelling, Data Quality, Reference & Master Data, Data Warehousing & Business Intelligence. If we ignore these principles, we face the same challenges and disappointments facing Business Intelligence that everybody believes has been replaced by Advanced Analytics.

The table below provides a simple mapping of the data management techniques for the different data formats.

Howard Diesel is a certified data management professional specializing in information governance advisory services. I help organizations establish effective information management programs that deliver business value by enabling capabilities as part of data project delivery. This ensures that only appropriate information management capabilities are established to address existing headaches and subsequent capabilities can be established once the pain has gone and the future can be envisioned. Howard started his career in 1986 as a database administrator and has been actively involved in every information knowledge area defined in the DMBoK, finally settling in information governance. Howard plays an active role in ensuring that business is doing the “right things” and establishing the appropriate information capabilities. After many years of doing “things right” he now focusses on helping people establish information management capabilities to do the right thing and do it right. At this stage he really enjoys working with people excited about data and information and working with community organizations like DAMA and ISO to help address information management issues. He is currently preparing to begin a PhD in Data Handling Ethics. Howard is the current DAMA SA President and working on multiple ISO working groups to understand how Data Governance must change to handle typical 4th Industrial Revolution technologies like AI and Blockchain. Howard is available for consultations and training on any area of information management. You can reach Howard at howard@modelwaresystems.com or president@dama.org.za.

Copyright Howard Diesel, Data Management Professional, Modelware Systems

Paper References

- What is Data Wrangling and Why Does it take So long, Mike Thurber, April 6 2018, https://www.elderresearch.com/blog/what-is-data-wrangling

- 12 Examples of Data Veracity, John Spacey, November 28 2017, https://simplicable.com/new/data-veracity#:~:text=Data%20veracity%20is%20the%20degree,illustrative%20examples%20of%20data%20veracity.

- Tidy Data, Hadley Wickham, RStudio, Journal of Statistical Software, http://vita.had.co.nz/papers/tidy-data.pdf

- The Unified Star Schema: An Agile and Resilient Approach to Data Warehouse and Analytics Design, Bill Inmon, Francesco Puppini, https://www.amazon.com/Unified-Star-Schema-Resilient-Warehouse/dp/163462887X

- Timeless Techniques for Data Warehousing, Howard Diesel, IRM UK Conference Presentation, November 6, 2020.