Well, perhaps not exactly today! But it was way back in 1986 that my colleagues in IBM Europe and I first defined a data warehouse architecture for internal use in managing sales and delivery of System/370 mainframes and System/38 minicomputers. If you recognise those names, I guess you are probably a bit past your thirtieth birthday! I subsequently described the architecture in the IBM Systems Journal in 1988, so if you want to hold the cake until 2018, that would also work.

Barry Devlin, Founder and Principal, 9sight Consulting. Barry has spoken at many of IRM UK’s conferences. We currently offer his seminar and workshop, Beyond Business Intelligence: Cognitive Decision Making, as a 2 or 3 day in-house course.

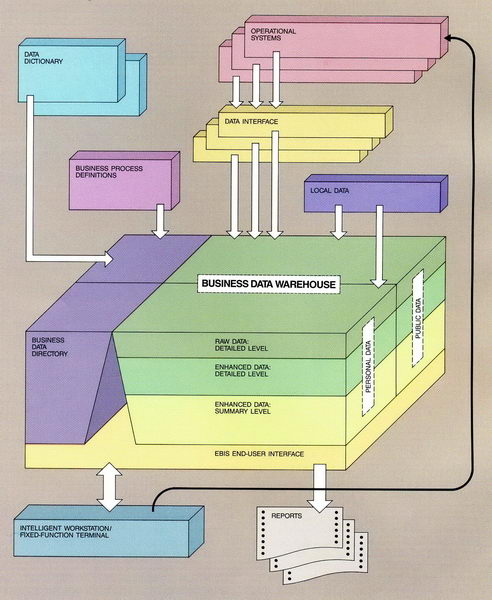

To get an idea of how much the world has changed in the intervening period, consider the above technology, as well as the fact that PCs running DOS 3.3 on Intel 80286-based machines with disks as large as 20 MB were considered state-of-the-art. Today’s smartphones are more powerful than the above mainframes. And yet, the data warehouse architecture has changed very little over the same timeframe. The architecture below, from the Systems Journal article, remains the basis for many data warehouse designs today.

Original data warehouse architecture, 1988

With such rapidly changing technology, the longevity of this architecture must point to some fundamental and unchanging principles of data management. They remain true in the face of evolving technologies. Today’s implementations—call them data warehouses or data lakes—must honour these principles and embed them in their designs.

However, recent developments in big data and the Internet of Things (IoT) change business priorities around different aspects of these principles. One key principle—in fact, a trade-off—of data warehousing was consistency vs. timeliness. This says that when inter-related data is created or managed in more than one application or geographical location, the extent of consistency that can be achieved is inversely related to the level of timeliness required. This arises because when data about one object exists in two or more places, the systems managing it must communicate with one another and exchange data to ensure consistency. The faster you want to achieve consistency, the faster and more expensive that communication will be.

In the original data warehouse architecture, we emphasized consistency because that was what the business of the time most wanted. We achieved consistency by reconciling data in the Business Data Warehouse although, as a consequence, we delayed its availability to users. This trade-off was understood and accepted by both business and IT.
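As a toy illustration of this trade-off (a sketch only, not the design from the 1988 article), the Python below models two systems that each hold an address for the same customer. The `Update` record, the system names, the tick-based clock and the "latest update wins" reconciliation rule are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Update:
    system: str    # hypothetical source system, e.g. "sales"
    customer: str
    address: str
    at: int        # time of the update, in arbitrary ticks


def immediate_view(updates, now):
    """Timeliness first: each system's latest value as of `now`, unreconciled."""
    view = {}
    for u in sorted(updates, key=lambda u: u.at):
        if u.at <= now:
            view[(u.system, u.customer)] = u.address
    return view


def reconciled_view(updates, now, delay):
    """Consistency first: one value per customer, built only from updates
    that are at least `delay` ticks old, so every system has had time to report."""
    view = {}
    for u in sorted(updates, key=lambda u: u.at):
        if u.at <= now - delay:
            view[u.customer] = u.address  # latest update wins (assumed rule)
    return view


updates = [
    Update("sales", "acme", "1 Main St", at=1),
    Update("delivery", "acme", "9 Dock Rd", at=5),
]

print(immediate_view(updates, now=6))            # two systems, two different addresses
print(reconciled_view(updates, now=6, delay=3))  # one address, but not yet the newest
print(reconciled_view(updates, now=8, delay=3))  # the newest address, three ticks late
```

The immediate view is available at once but leaves the user to decide which address is right. The reconciled view gives a single answer, at the cost of the delay that business and IT accepted at the time.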

But today’s consumers and business users demand instant gratification. Big data and IoT technologies drive and support this demand, and the emphasis has shifted to timeliness. Data lake “architectures” (my quotes imply that many are architectures only in name) focus on high-speed data availability. Few, however, pay much attention to the need for consistency. This need still exists and ends up as work for the data scientists and decision makers using the systems. This original principle of data warehousing, balancing the business needs for consistency and timeliness, must be honoured somewhere in the system, even though the relative priorities have changed.

We still need some component(s) in data architecture to do that—perhaps a data consistency island in the middle of the data lake! But, whatever we choose to call it, the concept and construct of the data warehouse will remain.

Here’s to the next thirty years!

Dr. Barry Devlin is among the foremost authorities on business insight and one of the founders of data warehousing, having published the first architectural paper on the topic in 1988. With over 30 years of IT experience, including 20 years with IBM as a Distinguished Engineer, he is a widely respected analyst, consultant, lecturer and author of the seminal book, “Data Warehouse—from Architecture to Implementation” and numerous White Papers. His 2013 book, “Business unIntelligence—Insight and Innovation beyond Analytics and Big Data” is available in both hardcopy and e-book formats. Barry is founder and principal of 9sight Consulting. He specializes in the human, organizational and IT implications of deep business insight solutions that combine operational, informational and collaborative environments. A regular contributor to journals, blogs and Twitter on the topic of decision making support and business intelligence, Barry is based in Cape Town, South Africa and operates worldwide. He can be contacted at barry@9sight.com

Copyright © 9sight Consulting