Graph databases are one of the fastest growing categories in data management, yet they remain an enigma to many. What can these workhorses do for your enterprise? If you are choosing a platform for a workload, you might consider a graph database if you would describe that workload with any of these words: network, graph, hierarchy, tree, structure, or path.

William McKnight, Consultant, McKnight Consulting Group, williammcknight@verizon.net

William will be presenting the following sessions at our Enterprise Data and BI Conference Europe 2016, 7-10 November, London: Master Data Management; Big Data Technology and Use Cases; and Strategies for Consolidating Enterprise Data Warehouses and Data Marts into a Single Platform.

This article was previously published here

Graph databases are part of the NoSQL family because standalone graph databases do not support SQL. However, they differ from other NoSQL databases such as key-value stores, column stores, and document stores: they store a more specific type of data (relationship data) and are less “general purpose.” Graph workloads are among the most decoupled of all workloads.

There are different kinds of graph databases as well. Though all graph databases implement vertices and the edges (relationships) among them, property graphs allow for the addition of “properties” or attributes on the vertices and edges. A graph database can have dozens or *billions* of vertices and edges. Both extremes can be good uses of graph databases.

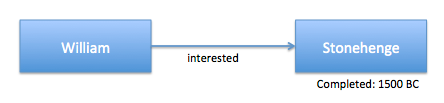

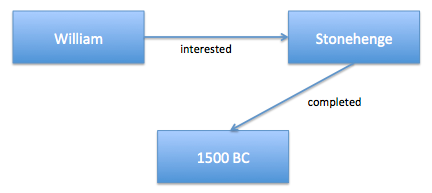

The property graph is the style of graph database that has the most market share. The other main style is the RDF (Resource Description Framework) triple store. Because it is based on a W3C (World Wide Web Consortium) standard, it has many more participants. To create the equivalent of properties on vertices and edges in an RDF-style database, you create a new relationship from the vertex or edge to a vertex with the property.

For example:

Property graph:

RDF triple store:
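The difference between the two styles can be sketched in plain Python. This is only an illustration: the people, companies, predicate names, and the `properties_of` helper are all hypothetical, and the intermediate `employment1` node is one common way to attach a property to a relationship in a triple model, not the only one.

```python
# Property graph: properties sit directly on vertices and edges.
# All names and values below are hypothetical illustration data.
property_graph = {
    "vertices": {
        "alice": {"label": "Person", "age": 34},
        "acme": {"label": "Company", "city": "London"},
    },
    "edges": [
        {"from": "alice", "to": "acme", "type": "WORKS_FOR", "since": 2012},
    ],
}

# RDF-style triples: every fact is a (subject, predicate, object) statement.
# A vertex "property" becomes one more relationship to a value.
rdf_triples = [
    ("alice", "type", "Person"),
    ("alice", "age", 34),
    ("acme", "type", "Company"),
    ("acme", "city", "London"),
    ("alice", "worksFor", "acme"),
    # The edge property "since" needs a node that stands for the relationship.
    ("employment1", "employee", "alice"),
    ("employment1", "employer", "acme"),
    ("employment1", "since", 2012),
]


def properties_of(subject, triples):
    """Collect every predicate/object pair stated about one subject."""
    return {pred: obj for subj, pred, obj in triples if subj == subject}


print(property_graph["vertices"]["alice"])
print(properties_of("alice", rdf_triples))
print(properties_of("employment1", rdf_triples))
```

The same facts are present in both structures; the property graph keeps them as attributes, while the triple list spells each one out as its own statement.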

Graph databases perform exceptionally well in path analysis (e.g., determining whether A is connected to B within 3 hops) because they only process the related vertices, whereas relational databases have to look through all the data to determine relationships. You can get close to the same performance in an RDBMS with a robust indexing and query optimization strategy, but with a graph database you know these queries will run well without needing to tune them or maintain indexes just for them.
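To make the "3 hops" question concrete, here is a minimal sketch of a hop-limited breadth-first search over an in-memory adjacency list. It is not how any particular graph database implements traversal; the function name `connected_within` and the sample graph are assumptions made for illustration. The point it shows is that the search only ever touches vertices reachable from the starting vertex.

```python
from collections import deque


def connected_within(adjacency, start, target, max_hops):
    """Return True if target is reachable from start in at most max_hops edges."""
    if start == target:
        return True
    visited = {start}
    frontier = deque([(start, 0)])
    while frontier:
        vertex, hops = frontier.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in adjacency.get(vertex, ()):
            if neighbor == target:
                return True
            if neighbor not in visited:
                visited.add(neighbor)
                frontier.append((neighbor, hops + 1))
    return False


# Hypothetical directed graph: A -> C -> D -> B, plus an unrelated vertex Z.
graph = {"A": ["C"], "C": ["D"], "D": ["B"], "B": [], "Z": ["A"]}

print(connected_within(graph, "A", "B", 3))
print(connected_within(graph, "A", "B", 2))
```

Vertex Z is never visited when the search starts at A, which mirrors the article's point about processing only related vertices.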

Of course, graph databases are not just for path analysis. They are also great at determining relative priority of vertices, with the priority based on the value of the vertices’ edges. Perhaps the PageRank algorithm best exemplifies this.

PageRank ranks vertices based on the quality (not quantity) of the inbound relationships and is used by Google to rank websites. How does PageRank determine an inbound link’s quality? By the quality of the inbound links to *that* vertex, and so on.
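The recursive definition above is usually resolved by iteration: start with equal ranks, then repeatedly let each vertex pass its rank along its outbound links. The following is a simplified textbook-style sketch, not Google's production algorithm. The damping factor of 0.85, the iteration count, and the sample link data are assumptions chosen for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated rank propagation (power iteration)."""
    vertices = set(links)
    for targets in links.values():
        vertices.update(targets)
    n = len(vertices)
    rank = {v: 1.0 / n for v in vertices}

    for _ in range(iterations):
        # Rank held by vertices with no outbound links is spread evenly.
        dangling = sum(rank[v] for v in vertices if not links.get(v))
        new_rank = {
            v: (1.0 - damping) / n + damping * dangling / n for v in vertices
        }
        # Each vertex splits its rank equally among the vertices it links to.
        for source, targets in links.items():
            if targets:
                share = damping * rank[source] / len(targets)
                for target in targets:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical links: "e" has two inbound links from low-ranked vertices,
# while "c" has a single inbound link from the highly ranked "hub".
links = {
    "a": ["hub", "e"],
    "b": ["hub", "e"],
    "hub": ["c"],
    "c": ["hub"],
    "e": ["hub"],
}

ranks = pagerank(links)
for vertex in sorted(ranks, key=ranks.get, reverse=True):
    print(vertex, round(ranks[vertex], 3))
```

In this sample, "c" ends up ranked above "e" even though it has fewer inbound links, because its one link comes from a highly ranked vertex.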

Although storage used to be the main point of competition between graph databases, in the past year vendors have been citing the benefits of the language used with the graph. Neo4j, a leading property graph database, primarily uses Cypher, which it made open source last year. The RDF stores primarily use SPARQL.

Both have extensive functionality for graph data, including a slew of graph algorithms.

SQL databases are extending into graph algorithms as well, making SQL a viable third graph language. In SQL engines such as Teradata Aster, you simply tell the query where the vertices and edges are in the tables. The results come back as tables, too.
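The idea of vertices and edges living in ordinary tables, with results returned as a table, can be sketched with standard SQL. The example below does not use Teradata Aster's graph functions; it swaps in a recursive common table expression run through Python's built-in `sqlite3` module. The table names, column names, sample rows, and the `reachable_within` helper are all hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE vertices (id TEXT PRIMARY KEY, label TEXT);
    CREATE TABLE edges (src TEXT, dst TEXT);
    INSERT INTO vertices VALUES
        ('A', 'Person'), ('B', 'Person'), ('C', 'Person'), ('D', 'Person');
    INSERT INTO edges VALUES ('A', 'C'), ('C', 'D'), ('D', 'B');
""")


def reachable_within(connection, start, max_hops):
    """Return (id, label, min_hops) rows for vertices reachable from start."""
    query = """
        WITH RECURSIVE reachable(id, hops) AS (
            SELECT ?, 0
            UNION
            SELECT e.dst, r.hops + 1
            FROM reachable AS r
            JOIN edges AS e ON e.src = r.id
            WHERE r.hops < ?
        )
        SELECT v.id, v.label, MIN(r.hops) AS min_hops
        FROM reachable AS r
        JOIN vertices AS v ON v.id = r.id
        GROUP BY v.id, v.label
        ORDER BY min_hops, v.id
    """
    return connection.execute(query, (start, max_hops)).fetchall()


rows = reachable_within(conn, "A", 3)
for row in rows:
    print(row)
```

The hop limit in the recursive step keeps the query from running indefinitely if the edge table contains cycles.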

We are seeing non-graph (as core) vendors getting into the act as well. DataStax acquired the team behind Titan last year and now has DataStax Graph based on Titan. We are beginning to see the slow return to the all-purpose database of yesteryear. The new winners will ultimately make today’s all-purpose databases seem primitive and will include graph functionality.

William McKnight is President of McKnight Consulting Group (www.mcknightcg.com). He is an internationally recognized authority in information management. His consulting work has included many of the Global 2000 and numerous midmarket companies. His teams have won several best practice competitions for their implementations and many of his clients have gone public with their success stories. His strategies form the information management plan for leading companies in various industries. William is author of the book “Information Management: Strategies for Gaining a Competitive Advantage with Data”. William is a very popular speaker worldwide and a prolific writer with hundreds of articles and white papers published. William is a distinguished entrepreneur, and a former Fortune 50 technology executive and software engineer. He provides clients with strategies, architectures, platform and tool selection, and complete programs to manage information. Follow William on Twitter: @williammcknight.

Copyright: William McKnight, McKnight Consulting Group